Forge Deployment Surfaces

2026-03-18

By Palisade Research

A note on why evaluation environments for industrial systems need controlled ingress, bounded context, and operational traceability.

Industrial software evaluation is structurally different from commodity SaaS trials. When the system under review is designed to inform procurement timing, logistics routing, or risk exposure across a supply chain, the evaluation environment itself affects whether the buyer reaches an accurate conclusion. Giving unbounded, self-serve access to a system like Forge is like handing an operator a flight simulator with no briefing on the aircraft type, mission parameters, or instrument configuration. The result is not evaluation; it is noise. Bounded environments exist because a false negative, rejecting a system that would have fit, costs far more in industrial software adoption than a slightly longer onboarding sequence.

Deployment Surfaces vs. Dashboards

The term "deployment surface" is used deliberately to distinguish what Forge presents from both dashboards and APIs. A dashboard is a passive read layer — it displays state but does not participate in decisions. An API is a programmatic interface — it serves data but imposes no context on how that data is consumed. A deployment surface is neither.

It is a bounded operational environment where the system presents information, accepts operator input, logs every interaction, and constrains the evaluation to conditions that reflect actual use. Forge's deployment surface includes:

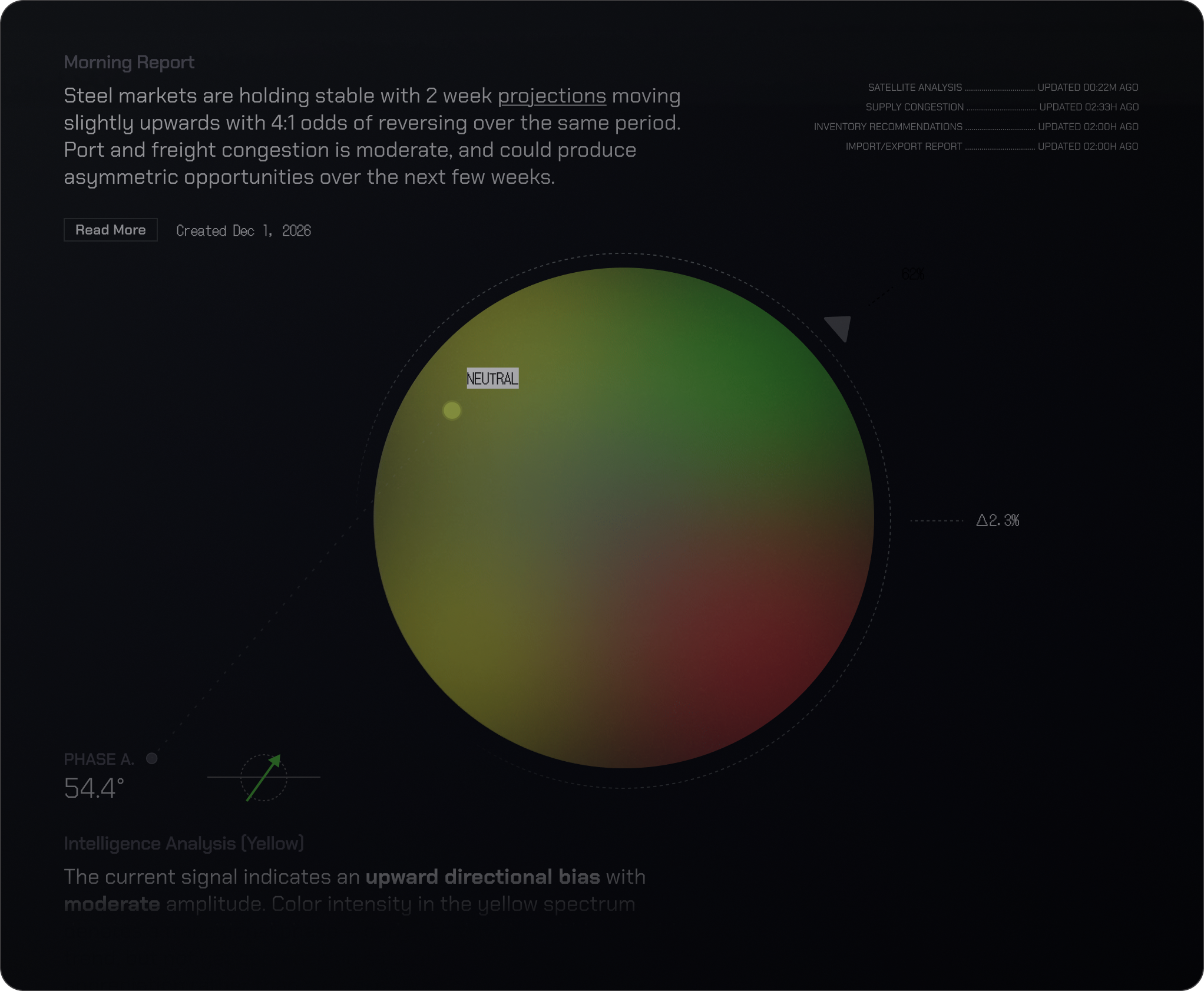

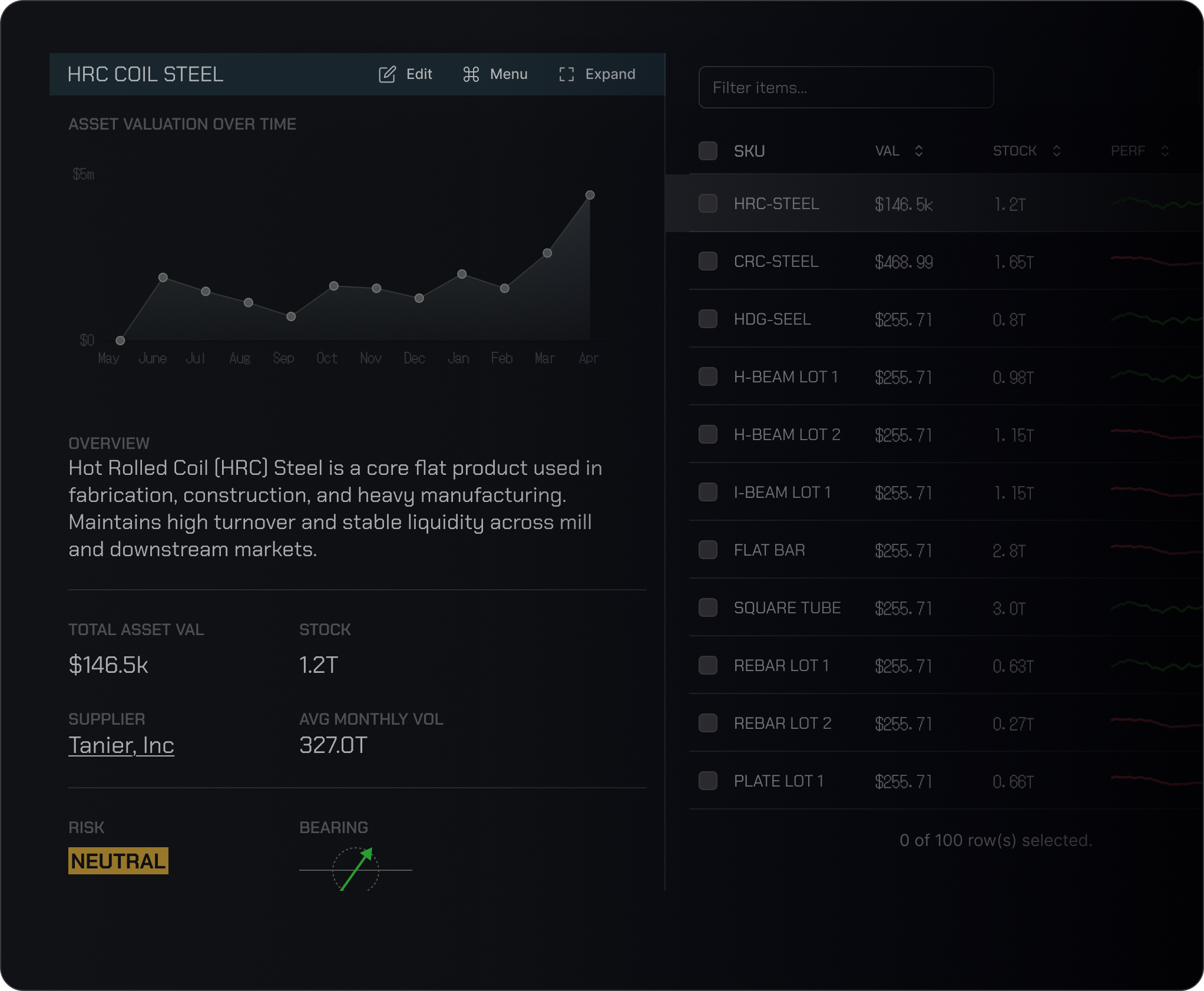

- Mission Control for portfolio-level oversight

- Citadel for scenario simulation

- Converse for natural-language interaction with MESO-1

- Research for structured market analysis

Each subsystem is accessible within the evaluation, but the surface itself governs what state the evaluator enters, what data is available, and what actions are traceable.

Controlled Ingress as Design Decision

Controlled ingress is not a friction layer imposed on the buyer — it is a design decision that preserves signal quality for both sides of the evaluation. When a system like Forge is deployed into an operator environment, the quality of the signals it processes depends on the quality of the context it receives. If the evaluation begins without organizational context — without knowing whether the buyer is a flat-rolled distributor in the Midwest, a structural steel fabricator with port-adjacent operations, or a trading desk managing HRC futures exposure — then the system cannot demonstrate relevant capability.

Controlled ingress ensures that by the time a user reaches the deployment surface, Forge has enough context to present a meaningful evaluation rather than a generic product tour.

Operational Traceability

Every action taken inside a Forge deployment surface is logged and attributable. This is a core design requirement rather than an incidental feature of the architecture, because traceability is not optional in industrial contexts. When an operator uses Forge to evaluate a procurement scenario involving HRC spot pricing against forward curve positions, every query submitted to MESO-1, every parameter adjusted in Citadel, and every report generated through Research is recorded with a timestamp, user identity, and session context.

This operational traceability serves two purposes: it allows the evaluating organization to review what was tested and concluded during the evaluation period, and it allows Palisade to understand how the system was used so that deployment recommendations can be refined.
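To make the record shape concrete, here is a minimal sketch of an append-only audit log carrying the three attributes named above: timestamp, user identity, and session context. It is an illustration under stated assumptions, not Forge's actual schema or API. The names `AuditEvent`, `AuditLog`, `record`, and `for_session`, and the `subsystem`, `action`, and `detail` fields, are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional


@dataclass(frozen=True)
class AuditEvent:
    """One logged interaction. Field names are illustrative assumptions."""
    timestamp: str            # ISO 8601, UTC
    user_id: str              # who performed the action
    session_id: str           # which evaluation session it belongs to
    subsystem: str            # e.g. "Converse", "Citadel", "Research"
    action: str               # e.g. "query", "adjust_parameter"
    detail: Dict[str, Any]    # free-form payload describing the action


class AuditLog:
    """In-memory, append-only log (a real system would persist events)."""

    def __init__(self) -> None:
        self._events: List[AuditEvent] = []

    def record(
        self,
        user_id: str,
        session_id: str,
        subsystem: str,
        action: str,
        detail: Optional[Dict[str, Any]] = None,
    ) -> AuditEvent:
        # Stamp the event at write time so callers cannot backdate it.
        event = AuditEvent(
            timestamp=datetime.now(timezone.utc).isoformat(),
            user_id=user_id,
            session_id=session_id,
            subsystem=subsystem,
            action=action,
            detail=dict(detail or {}),
        )
        self._events.append(event)
        return event

    def for_session(self, session_id: str) -> List[AuditEvent]:
        # Supports the review use case: replay what one session did.
        return [e for e in self._events if e.session_id == session_id]


if __name__ == "__main__":
    log = AuditLog()
    log.record("u-1", "s-1", "Converse", "query", {"text": "HRC spot vs forward"})
    log.record("u-1", "s-1", "Citadel", "adjust_parameter", {"name": "lead_time_days"})
    for event in log.for_session("s-1"):
        print(event.timestamp, event.subsystem, event.action)
```

Filtering by session serves both purposes described above: the evaluating organization can reconstruct what was tested, and the vendor can see how each subsystem was used.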

Evaluation Quality = Adoption Quality

The relationship between evaluation quality and adoption quality is not abstract. Organizations that evaluate industrial software through uncontrolled channels — forwarded demo links, shared credentials, unsupervised sandbox access — consistently produce lower-quality adoption outcomes. The evaluation becomes a checkbox rather than a genuine assessment of operational fit. Forge's access flow — request, verify, deploy — is designed to ensure that the people evaluating the system are the people who will operate it, that they enter with sufficient context, and that the evaluation period produces actionable conclusions rather than superficial impressions.
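The request, verify, deploy sequence can be read as a small state machine in which each stage is reachable only from the one before it. The sketch below assumes the verify step is a one-time code check; the class and method names (`AccessRequest`, `Stage`, `issued_otp`, `verify`, `deploy`) are hypothetical and do not describe Palisade's implementation. Code delivery, expiry, and retry limits are deliberately left out.

```python
import hmac
import secrets
from enum import Enum


class Stage(Enum):
    REQUESTED = "requested"
    VERIFIED = "verified"
    DEPLOYED = "deployed"


class AccessRequest:
    """Hypothetical model of one evaluator moving through the access flow."""

    def __init__(self, email: str, organization: str) -> None:
        self.email = email
        self.organization = organization
        self.stage = Stage.REQUESTED
        # Six-digit one-time code from a cryptographically secure source.
        self._otp = f"{secrets.randbelow(10**6):06d}"

    def issued_otp(self) -> str:
        # Exposed for illustration only; a real flow would send the code
        # out of band (for example by email) and never return it here.
        return self._otp

    def verify(self, submitted: str) -> bool:
        if self.stage is not Stage.REQUESTED:
            raise RuntimeError("request is not awaiting verification")
        # Constant-time comparison avoids leaking how many digits matched.
        if hmac.compare_digest(submitted.encode(), self._otp.encode()):
            self.stage = Stage.VERIFIED
            return True
        return False

    def deploy(self) -> None:
        # The bounded environment is reachable only after verification.
        if self.stage is not Stage.VERIFIED:
            raise PermissionError("verification required before deployment")
        self.stage = Stage.DEPLOYED


if __name__ == "__main__":
    request = AccessRequest("ops@example.com", "Example Steel")
    request.verify(request.issued_otp())
    request.deploy()
    print(request.stage)
```

Making `deploy` fail unless verification has succeeded is what rules out the uncontrolled channels listed above: a forwarded link or shared credential cannot skip a stage.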

Comparison to Industrial Evaluation Models

It is instructive to compare how other categories of industrial software handle evaluation:

- ERP platforms (SAP, Oracle) require multi-month implementation projects before any meaningful evaluation can occur

- MES and SCADA systems are evaluated on-site with vendor engineers present because they are too tightly coupled to physical infrastructure to be assessed remotely

- Commodity intelligence has largely defaulted to the SaaS model: open signup, self-serve trial, usage-gated conversion

None of these approaches is appropriate for what Forge does. Forge is not an ERP: it does not require months of configuration before it can be assessed. But it is also not a SaaS dashboard: it cannot be meaningfully evaluated without operator context, organizational parameters, and bounded conditions that reflect real deployment.

The deployment surface model represents a third path. The evaluation is fast enough to respect the buyer's time, structured enough to produce genuine signal, and traceable enough to support institutional decision-making. The access flow — from initial request through OTP verification to bounded deployment — is the mechanism by which Palisade ensures that every evaluation of Forge occurs under conditions where the system can demonstrate real capability and the buyer can reach a defensible conclusion. This is not a restriction on access. It is a commitment to the premise that evaluation quality and adoption quality are the same variable, measured at different points in time.